Web Archiving Service (WAS) 2.0 Release of New Curator Tools

The web is ephemeral – sites are continually redesigned and updated. Pages and even sites can disappear overnight. The Web Archiving Service (WAS) provides curator tools for selecting, capturing, managing, and preserving web content and a public interface for the search and display of archived sites.

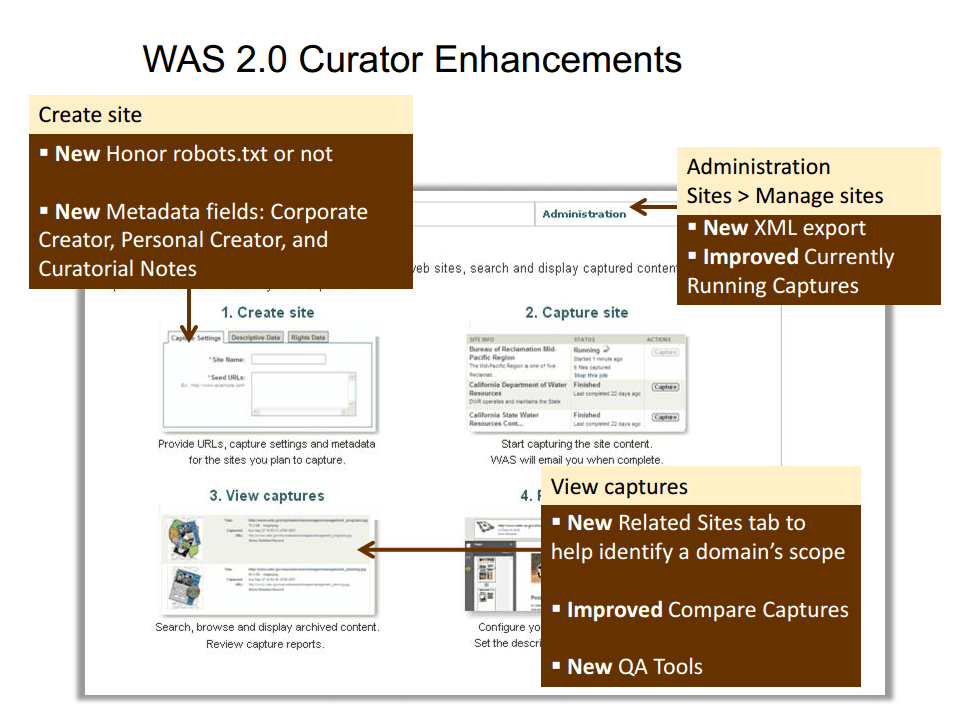

With the WAS 2.0 release, WAS curators now have new tools and reports that support:

- site creation and management;

- collection development;

- quality assurance;

- site analysis; and

- integration with local systems.

Free 30-day Trial

If you are not a current WAS subscriber but would like to try out the new tools, you can sign up for a free, 30-day trial. Send us an email at: washelp@ucop.edu.

About WAS

The Web Archiving Service (WAS) is a service of the University of California Curation Center, powered by the California Digital Library (CDL). Since its launch in 2007, WAS has served the University of California libraries and affiliated institutions, as well as educational institutions across North America. Learn more at was.cdlib.org.

Release Details

Site creation and management. Curators can create searchable notes for any site; these notes are not displayed to the public. They can be used to document conversations with content owners or other administrative information related to a capture. Additional metadata fields expand the options for describing a site. Curators can also override robots.txt files, deciding site by site whether or not to honor them.
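To illustrate what a per-site robots.txt decision amounts to, here is a minimal sketch using Python's standard `urllib.robotparser`. It is not how WAS implements the feature; the `may_capture` function and its `honor_robots` flag are hypothetical names chosen for this example.

```python
from urllib.robotparser import RobotFileParser


def may_capture(url, robots_lines, honor_robots=True, user_agent="*"):
    """Return True if `url` may be captured under the given robots.txt rules.

    `honor_robots` is a hypothetical per-site setting: when False, the
    robots.txt rules are ignored and every URL is allowed.
    """
    if not honor_robots:
        return True
    parser = RobotFileParser()
    parser.parse(robots_lines)  # rules supplied as a list of text lines
    return parser.can_fetch(user_agent, url)


# Example rules a content owner might post (illustrative only).
robots = ["User-agent: *", "Disallow: /private/"]

blocked = may_capture("http://example.org/private/report.html", robots)
overridden = may_capture(
    "http://example.org/private/report.html", robots, honor_robots=False
)
allowed = may_capture("http://example.org/public/index.html", robots)
print(blocked, overridden, allowed)
```

With the override switched on for a site, the same URL that the rules exclude becomes eligible for capture, which is the decision the curator is making.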

Collection development. The new ‘Related Sites’ tab lets curators analyze a large domain in order to identify its relevant subdomains.
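As a rough illustration of this kind of domain analysis, the sketch below counts how many URLs fall under each host of a domain. It is an assumption about the general idea, not a description of the ‘Related Sites’ tab itself; the function name and sample URLs are invented for the example.

```python
from collections import Counter
from urllib.parse import urlsplit


def count_subdomains(urls, domain):
    """Count the URLs found under each host that belongs to `domain`."""
    counts = Counter()
    for url in urls:
        host = (urlsplit(url).hostname or "").lower()
        # Keep the domain itself and any host ending in ".<domain>".
        if host == domain or host.endswith("." + domain):
            counts[host] += 1
    return counts


# Illustrative URLs, such as links discovered while crawling a large domain.
urls = [
    "http://www.example.edu/index.html",
    "http://library.example.edu/about",
    "http://library.example.edu/hours",
    "http://news.example.org/story",
]

subdomain_counts = count_subdomains(urls, "example.edu")
for host, n in subdomain_counts.most_common():
    print(host, n)
```

Hosts with many URLs are candidates for closer review as sites in their own right.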

Quality assurance. The quality assurance reports provide information that helps to proactively identify problems. For example, the ‘Fewer than 10 files’ report will let you know if content owners have changed a setting on their sites such as posting a robots.txt file. The ‘Redirected seed URLs’ report will allow you to easily see if a site has changed its URL. The ‘Failed capture’ report will tell you if a content owner’s server was down at the time of the capture.

Site analysis. The improved ‘Compare Capture’ features let curators gauge the volatility of a site from capture to capture. A new summary shows changes between captures at a glance, a new search finds keywords in URLs, and improved navigation makes it easier to view what is changed, new, missing, and unchanged between captures.
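The four categories can be sketched with simple set operations. The example assumes each capture is represented as a mapping from URL to a content checksum; that representation, and the function names, are assumptions for illustration rather than the way WAS stores or compares captures.

```python
def compare_captures(earlier, later):
    """Classify URLs as new, missing, changed, or unchanged.

    `earlier` and `later` map each URL to a checksum of its content
    (a hypothetical representation of a capture).
    """
    earlier_urls = set(earlier)
    later_urls = set(later)
    shared = earlier_urls & later_urls
    return {
        "new": sorted(later_urls - earlier_urls),
        "missing": sorted(earlier_urls - later_urls),
        "changed": sorted(u for u in shared if earlier[u] != later[u]),
        "unchanged": sorted(u for u in shared if earlier[u] == later[u]),
    }


def filter_by_keyword(urls, keyword):
    """Keep only the URLs that contain `keyword`, ignoring case."""
    return [u for u in urls if keyword.lower() in u.lower()]


first = {"/index.html": "a1", "/about.html": "b2", "/old.html": "c3"}
second = {"/index.html": "a1", "/about.html": "zz", "/news.html": "d4"}

report = compare_captures(first, second)
summary = {category: len(urls) for category, urls in report.items()}
print(summary)
print(filter_by_keyword(report["new"], "NEWS"))
```

The per-category counts play the role of an at-a-glance summary, and the keyword filter narrows any category to URLs of interest.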

Integration with local systems. The XML export of descriptive and administrative metadata can easily be transformed into Dublin Core (DC) records for use in other systems. The export is also compatible with the free MarcEdit tool, which transforms DC records to MARC, MODS, or other formats.
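A transformation of this kind might look like the following sketch, which uses Python's standard `xml.etree.ElementTree`. The input element names (`site`, `name`, `url`, `description`) and the field mapping are hypothetical, since the actual WAS export schema is not described here; only the Dublin Core element namespace is standard.

```python
import xml.etree.ElementTree as ET

# Standard namespace for the Dublin Core element set.
DC_NS = "http://purl.org/dc/elements/1.1/"
ET.register_namespace("dc", DC_NS)

# Hypothetical mapping from export fields to Dublin Core terms.
FIELD_MAP = {"name": "title", "url": "identifier", "description": "description"}


def site_to_dc(site_xml):
    """Build a simple record of DC elements from one exported site."""
    site = ET.fromstring(site_xml)
    record = ET.Element("record")
    for source_tag, dc_term in FIELD_MAP.items():
        for node in site.findall(source_tag):
            if node.text and node.text.strip():
                dc = ET.SubElement(record, f"{{{DC_NS}}}{dc_term}")
                dc.text = node.text.strip()
    return record


# Invented sample input; a real export would use its own element names.
sample = """
<site>
  <name>Example Site</name>
  <url>http://www.example.org/</url>
  <description>An illustrative archived site.</description>
</site>
"""

dc_record = site_to_dc(sample)
print(ET.tostring(dc_record, encoding="unicode"))
```

Keeping the mapping in one dictionary makes it easy to adjust once the real export fields are known.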

Please contact us with any questions about the new release. We can be reached at: washelp@ucop.edu